Some thoughts on social media, disinformation, and the 2024 elections

Multiple factors make data-driven research of social media activity more difficult than in recent election cycles, which empowers people and organizations who manipulate platforms for political gain

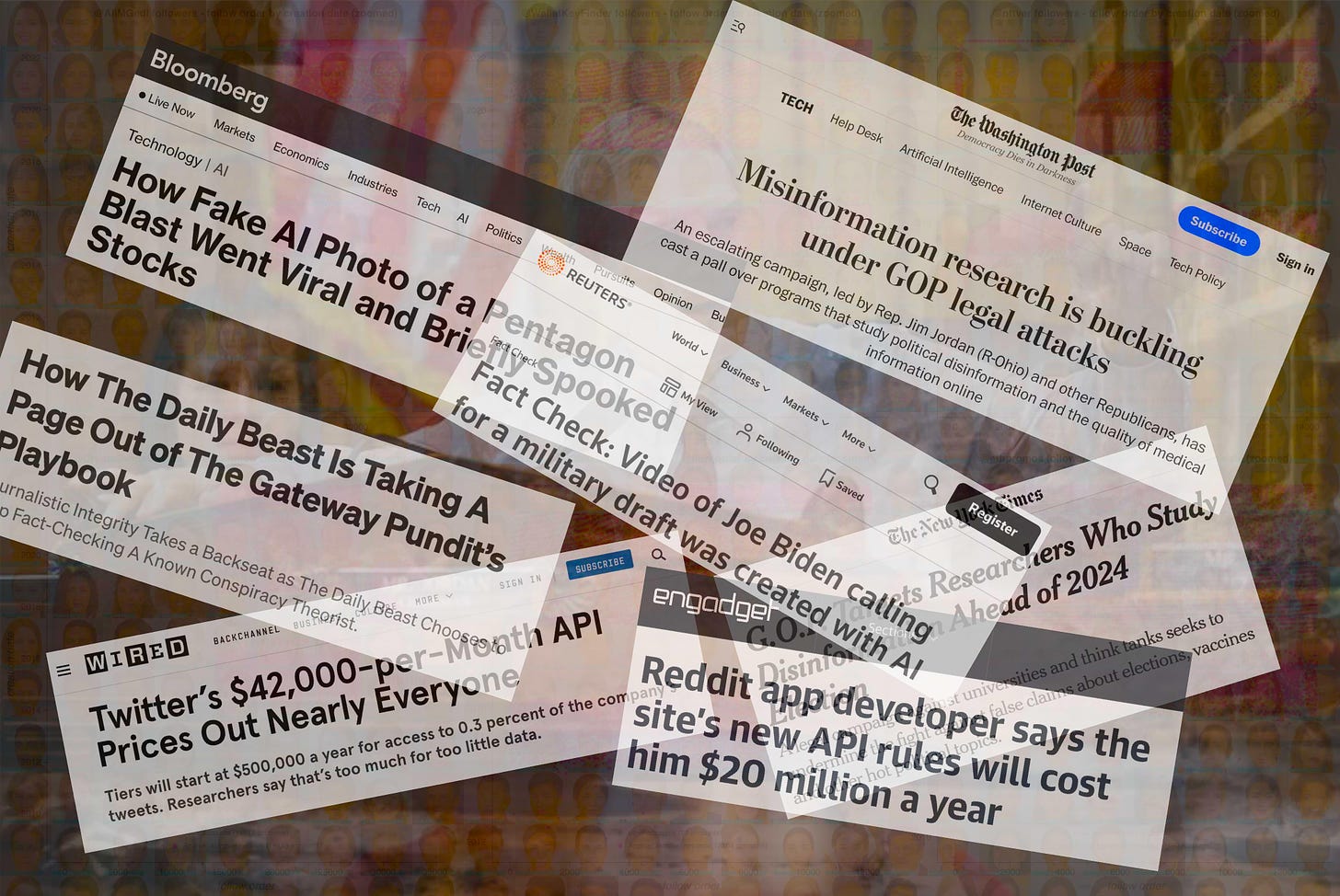

The 2024 U.S. general election is coming up in just under a year, and we can expect that as the election season heats up, disinformation campaigns and social media manipulation related to the election will intensify as well. In recent election cycles, social media propaganda campaigns have been studied by a variety of researchers, some with academic or other institutional affiliations and others random denizens of the internet such as myself. While this sort of research will continue as the 2024 election approaches, a perfect storm of confounding factors makes it more difficult than in previous election years. These factors include the following:

- A reduction in transparency and data availability by multiple major platforms
- Attacks on researchers by powerful government and corporate actors
- Increased ease of manufacturing misleading content due to advances in generative AI models
- Media outlets publishing inaccurate or outright fake “disinfo research” due to the short supply of legitimate data-driven research

As recently as the 2022 election, multiple major social media platforms offered free or low-cost APIs (application programming interfaces) that allowed independent researchers to query and download public data about posts, accounts, and interactions in standard formats (JSON, CSV, etc.). In early 2023, both X (formerly Twitter) and Reddit attached massive price tags to previously free or inexpensive API offerings, effectively putting these APIs out of financial reach for most individuals and institutions conducting social media research. Complicating matters, X has also limited manual methods of harvesting this information: inspecting an account’s followers or followees now shows only a few dozen accounts rather than the entire list, and the label showing which software was used to publish a given post (helpful for detecting automated posts) has been removed, to name just two examples. My recommendation, as always, is for the platforms themselves to reverse these changes and once again provide free or affordable APIs to researchers, but since this is unlikely to happen, researchers will simply have to get more creative with their data-gathering techniques.

Efforts from powerful figures in government, media, and the tech industry to portray social media research as a form of censorship complicate things further. The various authors of the “Twitter Files” (amplified by the company’s new owner) have repeatedly conflated studying social media activity and commenting on suspicious behavior and accounts with censorship, despite the fact that the people doing the studying and commenting have generally not had the power to censor anything. The new GOP majority in the House of Representatives has gone after both academic researchers and tech company employees who study the abuse and manipulation of social media platforms, portraying both as agents of censorship. (This is, ironically, an effort by self-proclaimed “free speech” crusaders to curtail the free speech of those who engage in social media research.)

Complicating matters even further is the rapid rate of technological advancement in the field of generative AI over the last year. While the 2020 and 2022 election cycles both featured rudimentary AI fakes in the form of GAN-generated faces from sites like thispersondoesnotexist.com, 2023 has seen an explosion in more sophisticated synthetic images, video, and text as generative AI improves. Although AI research didn’t create this problem — after all, one can whip up reasonably compelling fake images with Photoshop and a bit of spare time — it does make it drastically easier for pretty much anyone and everyone to create misleading media. Dealing with this will require a combination of increased skepticism and social media literacy on the part of social media users (attempting to corroborate from other sources that an event depicted in a realistic-looking image or video actually happened, for example) and clear platform policies requiring that synthetically generated media be labeled as such.

Finally, there is no guarantee that media coverage (both mainstream and independent) of disinformation and social media manipulation will be based on legitimate research. This has been the case in the past as well, obviously, and coverage of social media research has always suffered a bit due to the desire to present an exciting story, which has resulted in exaggerations of the reach of various influence campaigns and the publication of disputed stories based on papers that have yet to be published. The removal of API access for researchers worsens the situation, however, since there is simply far less legitimate research being produced in the absence of data, resulting in journalists platforming bogus “disinformation research”. This phenomenon has begun veering into Pizzagate territory — in one (still uncorrected) instance, a Daily Beast journalist published a hoax created by a self-styled “disinformation researcher” regarding the manufacture of CSAM, and another “anti-disinfo activist” recently misconstrued an arrest as child trafficking and was amplified by multiple media outlets.

What to do about all of this? I wish I had more concrete advice to offer than “more skepticism, please”, but that’s pretty much what it boils down to in the current online environment: more skepticism on the part of researchers when attempting to draw dramatic conclusions despite a lack of access to data, more skepticism from users who encounter outrage-inducing images and videos that may or may not be real, more skepticism from media outlets when they encounter an exciting-looking story based on alleged disinformation research, more skepticism from consumers of media coverage, and above all more skepticism from those in the general public who have been sold on the lie that research is equivalent to censorship.